H=the host C=Chris Blackburn

Welcome to Connected World, a podcast for engineers to learn more about trending topics influencing the connected world and technologies turning today’s impossible into tomorrow’s awesome.

H: Hello, welcome to Connected World, a podcast from TE Connectivity. I’m your host today, Tyler Kern. We’ve heard a lot about emerging technologies, like artificial intelligence. But what exactly is AI? How does it impact our lives currently? What will it do in the future? Joining me on the podcast today is Chris Blackburn. He is a technologist on the system architecture team at TE Connectivity. Chris, thanks very much for joining me.

C: Yeah, thanks, Tyler, thanks for having me.

H: Absolutely, absolutely. Chris, let’s start off from the basics. How do you define what artificial intelligence is and what it does?

C: AI is everywhere around us in the world. I think most of us are familiar with things at home, like voice assistants such as Alexa and Siri. And of course, as we join social media, we are constantly given content that is curated for us. We are shown products that we’ve clicked on, or have seen in the past. So AI is absolutely embedded in those realms. And really what it is, it’s a way for machines to learn by themselves and generate data by themselves, so learning our behaviors and providing us data that we want. And again, there are so many different applications here, a lot of cool stuff happening in the space.

H: You mentioned these kinds of smart home devices. I wouldn’t be able to cook without the Google Home display that we have in our kitchen, right? You are right, there’s AI all around us. That’s a great point. I have a two-part question next. What have been some of the primary uses for AI? And what are some of the emerging applications you are seeing in the space?

C: Some of those primary use cases are really in the marketing realm, and again making sure that products we are interested in are reaching consumers. So if I purchase a certain product on Amazon, you know, Amazon learns my purchase history and may suggest future products. For example, you might see “other customers bought this” because you bought certain products. So I think in that regard, recommendation engines are really one of those primary use cases. One of the changes that we see here, though, and one of the interesting aspects, is what I call remote and harsh environments, and even some applications that require extreme data privacy. These are things like oil and gas exploration, mining and natural resource extraction, and the really important things like farming, food production, water access and sewage control.
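As a rough illustration of the “other customers bought this” idea Chris describes, here is a minimal sketch of a co-purchase recommender. It is not how Amazon or any real recommendation engine works; the product names, the `orders` data, and the function names `build_co_purchase_counts` and `also_bought` are all hypothetical and chosen only for illustration.

```python
from collections import Counter
from itertools import combinations

# Hypothetical purchase histories; each set is one customer's basket.
orders = [
    {"tent", "sleeping bag", "lantern"},
    {"tent", "sleeping bag"},
    {"tent", "lantern"},
    {"sleeping bag", "pillow"},
]


def build_co_purchase_counts(orders):
    """Count how often each pair of products appears in the same basket."""
    counts = {}
    for basket in orders:
        for a, b in combinations(sorted(basket), 2):
            counts.setdefault(a, Counter())[b] += 1
            counts.setdefault(b, Counter())[a] += 1
    return counts


def also_bought(product, counts, top_n=2):
    """Return up to top_n products most often bought together with `product`.

    Ties are broken alphabetically so the result is deterministic.
    """
    neighbours = counts.get(product, Counter())
    ranked = sorted(neighbours.items(), key=lambda kv: (-kv[1], kv[0]))
    return [name for name, _ in ranked[:top_n]]


counts = build_co_purchase_counts(orders)
print(also_bought("tent", counts))
```

The more baskets the counts are built from, the more stable the suggestions become, which is the same "more data, better results" point raised later in the conversation.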

H: That’s really interesting. I’ve heard something said about AI in the past and I am curious about whether or not it’s correct, let’s say. I’ve heard people say that AI is only as good as the data you are feeding it. Is that true? Can you explain how AI gets built and how it works, that sort of thing?

C: Yeah, absolutely. When we feed data to a neural network, we call this machine learning or training. And that’s absolutely true: the data going in really is what we have to use to train these models. If you have a small data set or a poor data set, your models are not going to be that great. But one of the interesting things is that we train that model, and then we deploy it to the actual use cases. This is what we call inferencing. Now that I have this model trained, I bring new data to it, and it infers from the data it was trained on what to expect from this new data. The most interesting thing about AI is that the cycle is not one-way. It’s a repetitive cycle. As the AI infers from new data, I am constantly building and compiling my data set. So the more we use features like this, the more things I ask Alexa, the smarter it gets. This is really machine learning at its core.
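The train, infer, collect, retrain cycle described here can be sketched in a few lines. This is only an illustration under simplifying assumptions: it swaps the neural network for a one-variable least-squares line fit so that it stays self-contained, and the sample values and the names `train` and `infer` are made up for the example.

```python
def train(samples):
    """Fit y = slope * x + intercept by ordinary least squares.

    `samples` is a list of (x, y) pairs with at least two distinct x values.
    """
    n = len(samples)
    mean_x = sum(x for x, _ in samples) / n
    mean_y = sum(y for _, y in samples) / n
    var_x = sum((x - mean_x) ** 2 for x, _ in samples)
    cov_xy = sum((x - mean_x) * (y - mean_y) for x, y in samples)
    slope = cov_xy / var_x
    return slope, mean_y - slope * mean_x


def infer(model, x):
    """Apply a trained model to a new input (the "inferencing" step)."""
    slope, intercept = model
    return slope * x + intercept


# Initial (hypothetical) training data.
dataset = [(1.0, 2.1), (2.0, 3.9), (3.0, 6.2)]
model = train(dataset)

# New inputs arrive in the field; each one is first used for inference,
# then added to the data set once its true value is known, and the model
# is retrained on the larger data set.
for x, observed_y in [(4.0, 8.0), (5.0, 10.1)]:
    predicted_y = infer(model, x)
    print(f"x={x}: predicted {predicted_y:.2f}, observed {observed_y:.2f}")
    dataset.append((x, observed_y))
    model = train(dataset)
```

Retraining after every single observation is a simplification; the point is only that inference produces new data, and that data feeds the next round of training.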

H: That’s really interesting. So as you get a wider and larger sample size and it has more data that it has collected, it gets smarter and provides maybe better recommendations, or it puts out better conclusions based on the fact that you are giving it more data and it’s able to take that and make smarter decisions.

C: Absolutely, absolutely. You know, this is of course great. We get more precise models, but there are also challenges that come along with this: how am I going to store this massive amount of data, and how am I going to transfer it between edge data centers and traditional cloud data centers? There’s just this massive amount of data being generated. If we go back 10 or 15 years, a lot of the data that was created in the world was human-generated data from input, from our keyboards or recordings, film, things like that. And now we are moving into this era of machine-generated data. It’s just an absolute data explosion, and that’s certainly influencing how we’re building system architectures and handling this now.

H: Absolutely. So tell me something about the efforts being made on the hardware side, because it sounds like developments are taking place there as well.

C: Absolutely. I think one of the key things here is the migration of compute, storage and these AI accelerators towards the source of the data. So typically we have these large data centers. We pin that network and that data is moving to and from those data centers and within those data centers. Now we are starting to see those compute resources and storage resources moved closer to where folks and these machines are generating the data. And this is really the convergence of what we call edge computing with the IoT, or Internet of Things, end-points, devices. 5G has been an absolute factor in transmitting this data over these newly defined networks.

H: This podcast is called Connected World, right? We’ve heard so much about 5G and how it’s going to change the world and how it’s going to allow free sharing of data at much more rapid rates and that sort of thing. How does 5G impact the development of AI and the growth of it on a wider scale?

C: We’ve talked about this repetitive cycle of training these models and then inference and deploying these models. As we look at 5G, I now have very fast communication right at the data source to and from things. So, with things like the voice assistants we’ve talked about or all the apps on smartphones, from search and social, and all these things, we’re going to get more real-time, faster responses. The population doesn’t even want to wait a second or two for information anymore. And that information needs to be on demand. 5G is a key enabler to make that communication as fast as possible.

H: So Chris, what challenges do the latest trends in AI and machine learning present for engineers? What kinds of things are engineers really working on, and what hurdles need to be overcome as they continue to work in the field of AI and machine learning?

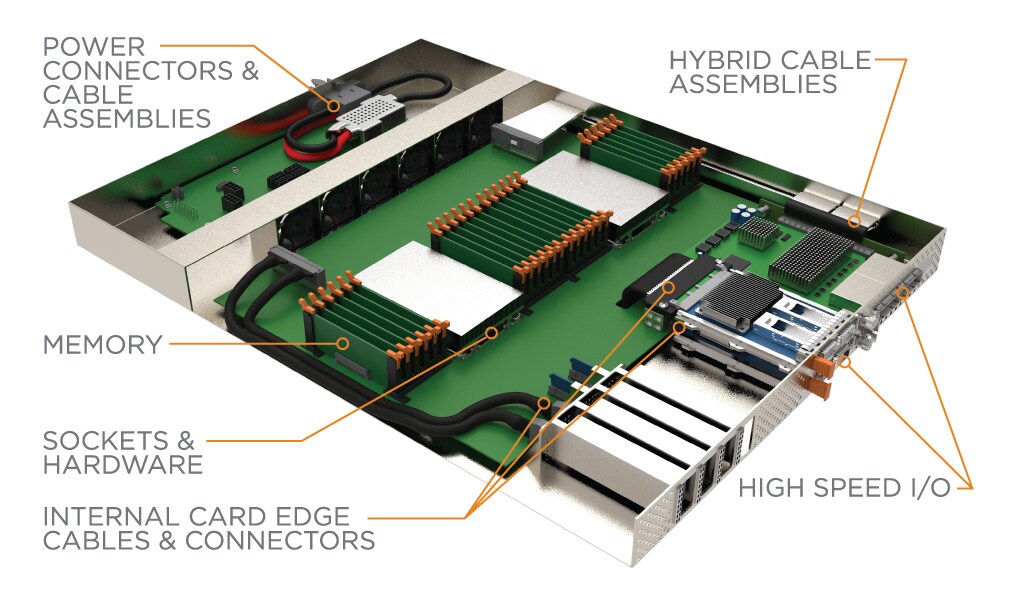

C: Let’s start with power. Power is one of those things that is a finite resource. When we look at the footprint of a cloud data center, there’s only so much power that can come into that building and be consumed. So right now these data centers are not as sustainable as we would like them to be. So there’s this trend of sustainable data centers and renewable energy at these data centers. But when we look at these AI-specific devices, whether they are GPUs or different ASICs, they are very power hungry and consume quite a bit of power. So we have this sort of power ceiling, and then there is power delivery. On the chip side, we need to make sure these GPUs and ASICs are power efficient. That’s certainly one area that the engineering community is tasked with solving and is quite challenging. The other side is scalability. So I talked about all of these different end sites, edge data centers, cloud data centers. How do we scale these systems at mass scale? Think of companies like Microsoft, Amazon, Facebook and Google. We call these the hyperscalers. And their infrastructure is just insanely large. When I go through the mechanical stack of the circuit boards, servers, cables, connectors, how do I integrate all of this in a way that makes sense? And just scaling these data centers and systems is an extreme challenge.

H: Definitely, definitely. What developments in AI are you particularly excited to see? As things continue to move forward, what excites you these days?

C: I think healthcare is something that is just absolutely awesome, like telemedicine, regular check-ins with the elderly population. Doctors can read X-rays, or look for different cancers, just by looking at these images. That is the stuff that is an absolute human necessity. AI can help us, the human population, live longer and healthier. I mentioned earlier some of the food production and water access and things like that. But of course there’s a fun side of things, too. We all have filters on Instagram or Snapchat. That’s the direct result of AI. Look at image classification and things like that. I have a particularly fun story here. Google has a product called Arts and Culture. You are able to take a picture of yourself and upload that. There are TPU clusters that take a look at that image. They match it to a corresponding image of Renaissance paintings. This matched my face to an image of Simon George of Cornwall, which was drawn in the 1400s. I now use this thumbnail in all of my business-to-business communication like Microsoft Teams, Skype and things like that. Definitely have a little bit of fun with it.

H: That’s awesome. Do you also have a beard like Simon George of Cornwall?

C: Yes, same beards, spot-on and the same hat.

H: Oh, and the same hat, good. You got to complete the look, you got to round the whole thing out for sure. As you were talking about the big players in AI, one of the names you mentioned kind of stood out to me, because it sounded different from the others: BMW. Can AI and will AI play a big role when it comes to, maybe, autonomous vehicles in the future?

C: I talk about these large complex data sets and, you know, your model is only as good as the data you are putting in and training with. Nvidia is absolutely entrenched in this driverless car and autonomous vehicle push. But of course, so are the auto manufacturers like BMW and Toyota, with both luxury and standard vehicles. I think what we are seeing is these technologies from the data centers really make their way into the cars. When we look at what’s in the car now, it’s a variety of these sensors and cameras and things. When I look at how much data a vehicle is collecting, a driverless car is collecting, you know, it’s just massive amounts. Recently Nvidia put out some figures talking about 400 petabytes of raw data with 100 vehicles a year. When we look at the safety and how we view these autonomous vehicles, we want to put them through every possible scenario: speed up around a blind corner, put a pedestrian out there, how does this vehicle react? The more situations you put this in, the better we can train and tune these models so that these are safe and reliable solutions for transportation.
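To put the quoted figure of 400 petabytes a year for 100 vehicles into more familiar units, here is a small back-of-the-envelope calculation. It is only a sketch: it assumes the 400 petabytes is the fleet total, uses decimal units (1 PB = 10^15 bytes), and spreads the data evenly over every second of a 365-day year, which real test vehicles would not do.

```python
# Assumptions (not from the source): decimal units and even spread over a year.
PETABYTE = 10**15
TERABYTE = 10**12
MEGABYTE = 10**6
SECONDS_PER_YEAR = 365 * 24 * 3600

fleet_bytes_per_year = 400 * PETABYTE  # figure quoted in the conversation
vehicles = 100                         # figure quoted in the conversation

per_vehicle_per_year = fleet_bytes_per_year / vehicles
per_vehicle_per_day = per_vehicle_per_year / 365
per_vehicle_per_second = per_vehicle_per_year / SECONDS_PER_YEAR

print(f"Per vehicle per year:   {per_vehicle_per_year / PETABYTE:.1f} PB")
print(f"Per vehicle per day:    {per_vehicle_per_day / TERABYTE:.1f} TB")
print(f"Per vehicle per second: {per_vehicle_per_second / MEGABYTE:.1f} MB")
```

Under these assumptions each vehicle accounts for about 4 PB a year, or roughly 11 TB a day. Since vehicles are not driven around the clock, the rate while actually recording would be higher than the averaged per-second figure.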

H: So, bring it home for us with some examples of how this is going to change our lives or change the world we know. You mentioned healthcare. That’s obviously a big one, food production, things along those lines. But how might our lives look different in ten years because of further growth in AI?

C: I mentioned some of those key fields. We talked a little bit about transportation. Another one of those is finance. So, where should we put our money for retirement and how should allocations be readjusted? The fintech sector is absolutely booming and trying to make these real-time decisions about how to handle our money. So really, absolutely every single field is going to be impacted by AI, from manufacturing to transportation to pharmaceuticals. We look at things like drug research and discovery. I can now quickly iterate through so many different possible combinations and drive towards these new solutions very quickly. They say “fail fast, fail often”. I think that comes into play here.

H: My last question is a self-serving one: can AI help me with my upcoming fantasy football draft?

C: I think so. They may not be right, but based on what everyone else picked, or let’s say who the winner from last year picked, you just copy those choices.

H: That’s good. I need all the help I can get these days. I have to save face among my friends.

C: Absolutely.

H: Excellent. Chris Blackburn. He is a technologist on the system architecture team for TE Connectivity. Chris, thank you so much for joining me today and talking about some of the applications of AI, where things are moving in the future, giving us a broad overview of the industry and everything that’s going on. Thank you for joining us.

C: I had a blast.

H: Absolutely. I did as well. I hope all of you enjoyed listening to this episode. Of course, this podcast is called Connected World. It’s a podcast from TE Connectivity. If you’ve not already subscribed on Apple Podcasts or Spotify, make sure you go subscribe to stay up-to-date with everything that’s going on in the connected world with advancing technologies. Of course, we will be back soon with more episodes of the podcast. But until then, I’ve been your host today, Tyler Kern. Thanks for listening.
